
If shit is really broken

You should contact the NOC team.

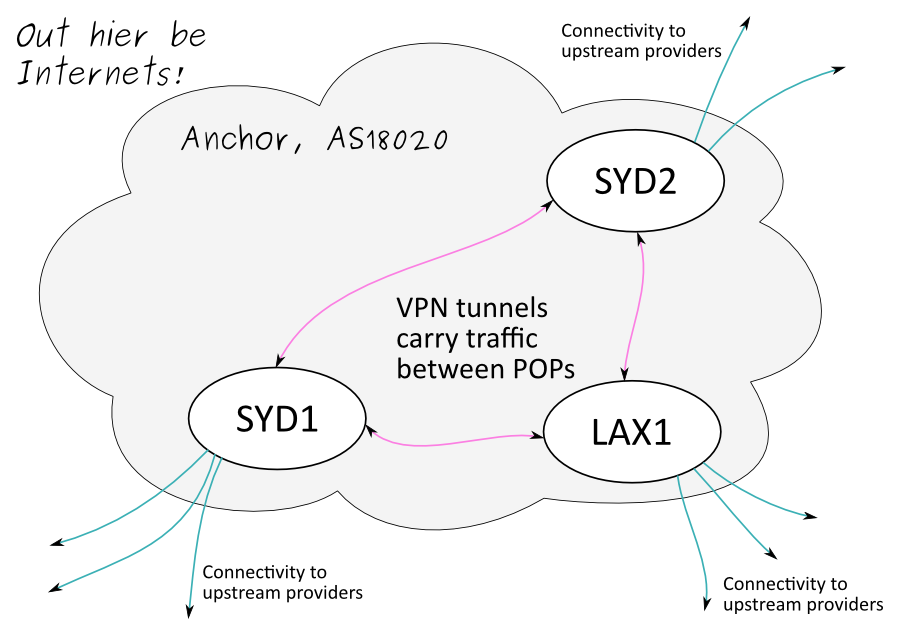

High-level diagram

POPs

Anchor maintains a few points of presence (POPs), one of which is our headquarters in the Sydney CBD.

Address space

IPv4

IPv6

2407:7800::/32
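A quick way to sanity-check whether an address belongs to our IPv6 allocation is Python's standard `ipaddress` module. This is only a sketch: the function name `in_anchor_v6` is made up for illustration, and the /48 figure is plain arithmetic rather than a statement of how the space is actually carved up.

```python
import ipaddress

# Anchor's IPv6 allocation, as listed above.
ANCHOR_V6 = ipaddress.ip_network("2407:7800::/32")

# How many /48s fit inside a /32 (pure arithmetic, not an allocation plan).
SLASH_48_COUNT = 2 ** (48 - ANCHOR_V6.prefixlen)


def in_anchor_v6(addr: str) -> bool:
    """Return True if addr is an IPv6 address inside 2407:7800::/32."""
    ip = ipaddress.ip_address(addr)
    # Membership is always False when the IP versions differ.
    return ip in ANCHOR_V6
```

For example, `in_anchor_v6("2407:7800:1::1")` is true, while an address under `2407:7801::` is not.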

Autonomous System

Anchor's AS number is 18020. This is used for our BGP routing and lets us get on the interwebs.

Routing

The basics:

We have a few POPs inside the "Anchor cloud" (our Autonomous System)

OSPF gets traffic between POPs

BGP gets traffic in and out of the Anchor cloud

Dynamic routing is all about advertising your presence: rather than setting up static routes on every system, you advertise yourself and other systems figure out how to reach you

Why we need to route

Anchor has multiple connections to the outside world at each POP for redundancy. If a connection fails (e.g. a backhoe casualty incident) we still have connectivity. Routing protocols handle the failover for us, keeping downtime to a minimum.

Routing also gives us some flexibility in addressing, as we can advertise chunks of address space from each POP where it's in use.
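To make "chunks of address space" concrete, here is a rough sketch of splitting one allocation into equal-sized chunks, one per POP. Everything specific is an assumption for illustration: the prefix is a documentation range (RFC 5737), the POP names are invented, and the real allocations will not be this tidy.

```python
import ipaddress

# Hypothetical values: a documentation prefix and made-up POP names.
ALLOCATION = ipaddress.ip_network("198.51.100.0/24")
POPS = ["pop-a", "pop-b", "pop-c"]


def carve(allocation, pops, new_prefix):
    """Hand out one new_prefix-sized chunk of allocation to each POP, in order."""
    chunks = allocation.subnets(new_prefix=new_prefix)
    # zip stops at the shorter input, so leftover chunks stay unassigned.
    return dict(zip(pops, chunks))


# Each POP would then advertise only the chunk it actually uses.
ADVERTISED = carve(ALLOCATION, POPS, 26)
```

With these inputs the /24 yields four /26s, three of which are assigned and one left spare.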

How we make use of BGP

BGP is primarily an exterior routing protocol, used between autonomous systems. BGP is also often used inside an AS so that border routers can have a consistent picture of connectivity to the outside world. When used in this way it's called IBGP (Interior or Internal BGP).

Exterior routing

The border routers at each POP advertise part of Anchor's address space to the internet, via our upstream providers at each POP. The border routers are referred to as "BGP speakers", and BGP is spoken along the cyan-coloured links in the diagram above.

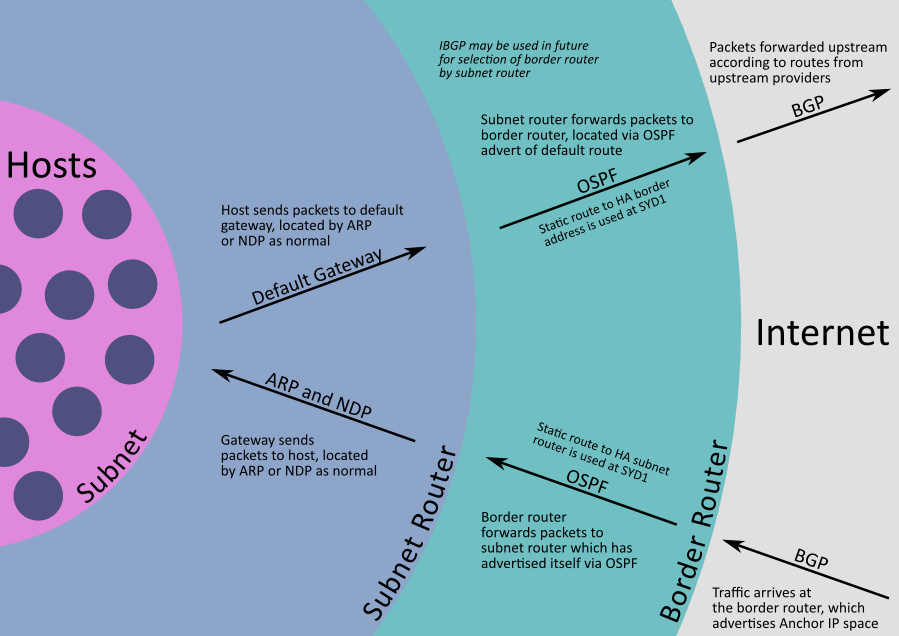

The working behind this is roughly » as follows

Note that a given chunk of address space (e.g. a /24) can be advertised from multiple POPs. This is not a problem, so long as traffic can be correctly routed to its destination once it's inside the AS.
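The idea can be sketched as follows: the outside world sees one /24 from two POPs, so a packet may arrive at either one, and the internal routing table (with more specific entries) decides where it finally lands. The prefixes, POP names and the `deliver` helper are all hypothetical; this is a toy model of longest-prefix matching, not our router configuration.

```python
import ipaddress

# Hypothetical internal view: more-specific prefixes mapped to the POP
# where the hosts actually live. Externally, both POPs advertise the /24.
INTERNAL = {
    ipaddress.ip_network("203.0.113.0/25"): "pop-a",
    ipaddress.ip_network("203.0.113.128/25"): "pop-b",
}


def deliver(dst, ingress_pop):
    """Return the list of POPs a packet crosses, or None if we have no route."""
    ip = ipaddress.ip_address(dst)
    matches = [net for net in INTERNAL if ip in net]
    if not matches:
        return None
    # Longest-prefix match: the most specific route wins.
    home = INTERNAL[max(matches, key=lambda net: net.prefixlen)]
    if home == ingress_pop:
        return [ingress_pop]
    # Arrived at the "wrong" POP, so it crosses an inter-POP link.
    return [ingress_pop, home]
```

A packet for `203.0.113.200` arriving at `pop-a` takes one extra hop to `pop-b`, which is less efficient but still correct.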

Internal routing

We already have an interior routing protocol (OSPF), so why do we need to use BGP internally as well? We run IBGP in a limited fashion within our AS; it basically gives border routers options for egressing the AS.

At the time of writing, IBGP is used almost exclusively within each POP, and is not represented on the diagram above.

A non-representative scenario

This is a generic example of why IBGP is useful. We do not use IBGP » like this though.

A realistic scenario

We use IBGP between the border routers at each POP. Consider the » following example

How we make use of OSPF

We use OSPF to get packets between POPs. The alternative is to maintain static routes at each POP, which has substantial management overheads and would be error-prone.

Between POPs

OSPF routers are always "adjacent" to each other. In an ideal world this would be a direct fibre connection. The harsh reality is that we use VPN tunnels between POPs, as described in the next section. These are the pink-coloured links in the diagram above.

Within a POP

At the time of writing, we're not doing this but it's on the cards for the future.

FIXME: finish this next

Advertising subnets "up" to our border routers.

Use of routing at each POP

Inter-POP connectivity

We use tunnels to carry inter-POP traffic. This is fantastic because it allows our privately-addressed networks (RFC 1918) to be accessed from anywhere in the Anchor network. On the downside, it means we need to be careful when allocating new privately-addressed networks: a new network must not overlap one already in use at any other POP.
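Being careful mostly means checking a proposed network against everything already allocated. A minimal sketch of that check is below; the `check_allocation` helper and the example networks are hypothetical, and the list of existing networks would have to come from wherever allocations are actually recorded.

```python
import ipaddress

# The three private IPv4 blocks defined by RFC 1918.
RFC1918 = [
    ipaddress.ip_network(block)
    for block in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")
]


def check_allocation(candidate, existing):
    """Return the existing networks that overlap candidate.

    Raises ValueError if candidate is not inside RFC 1918 space.
    """
    net = ipaddress.ip_network(candidate)
    if net.version != 4 or not any(net.subnet_of(b) for b in RFC1918):
        raise ValueError(f"{candidate} is not RFC 1918 private space")
    return [e for e in existing if net.overlaps(ipaddress.ip_network(e))]
```

An empty list means the candidate is safe to use; anything else names the networks it would collide with. For example, proposing `10.1.0.0/16` when `10.1.2.0/24` already exists reports the /24 as a conflict.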

We use OpenVPN and IPsec tunnels depending on the available networking infrastructure at each POP. Use of OSPF between POPs means we can run a full mesh of tunnels if we want to, and everything should keep working in the event of failures.
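Here is a toy model of why a full mesh keeps working when a tunnel dies. It builds a mesh between some made-up POP names with an assumed uniform link cost, then runs a shortest-path search (Dijkstra's algorithm, the same family of calculation OSPF uses) before and after a tunnel is removed. Real OSPF also floods link-state updates and has timers; none of that is modelled here.

```python
import heapq
from itertools import combinations


def full_mesh(pops, cost=10):
    """One tunnel between every pair of POPs: n*(n-1)/2 links in total."""
    return {frozenset(pair): cost for pair in combinations(pops, 2)}


def shortest_path(links, src, dst):
    """Return (total_cost, path) from src to dst, or None if unreachable."""
    adjacency = {}
    for pair, cost in links.items():
        a, b = tuple(pair)
        adjacency.setdefault(a, []).append((b, cost))
        adjacency.setdefault(b, []).append((a, cost))
    queue = [(0, src, [src])]
    seen = set()
    while queue:
        dist, node, path = heapq.heappop(queue)
        if node == dst:
            return dist, path
        if node in seen:
            continue
        seen.add(node)
        for neighbour, cost in adjacency.get(node, []):
            if neighbour not in seen:
                heapq.heappush(queue, (dist + cost, neighbour, path + [neighbour]))
    return None


# Hypothetical POP names; a three-POP mesh has three tunnels.
links = full_mesh(["pop-a", "pop-b", "pop-c"])
direct = shortest_path(links, "pop-a", "pop-b")

# Simulate the pop-a <-> pop-b tunnel failing: traffic detours via pop-c.
del links[frozenset({"pop-a", "pop-b"})]
detour = shortest_path(links, "pop-a", "pop-b")
```

With three POPs, losing any single tunnel still leaves a two-hop path between every pair, which is the redundancy the mesh buys us.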

Addressing policy

Private addressing is a bit of a mess

https://map.engineroom.anchor.net.au/BackupNetworkAddressingPolicy

Edumacation

Fukken <x> how does it work!?

Per-POP stuff

We should develop a template for all the information you expect to find for each POP. Structure will be something like /PoP/$popname/foo

Connectivity (upstream providers)

Physical environment

Backdoor access

Network infrastructure (hardware, how it's deployed, what software it runs, local network architecture)

Naming policy (e.g. VLANs in FQDNs)

Local addressing policy? Don't duplicate anything from the global scope

Access and delivery arrangements

Local routing policies (e.g. BGP path prefixes, any funky processing that we do)

Provisioning procedures

Resource allocations (power, network ports)

Troubleshooting? Ideally shouldn't be POP-specific